Opinion: The current “AI Glasses” are awkward and will inevitably transition to AR + AI Glasses!

Here is another blog by our guest author Axel Wong. His old blog post about Meta Orion AR Glasses is very popular, with over 143,000 people reading it. This time he gives a breakdown of the current AI Glasses hype with countless companies investing in this type of glasses with camera and audio input for multimodal AI models — but without a display, without multimodal output. Enjoy!

____

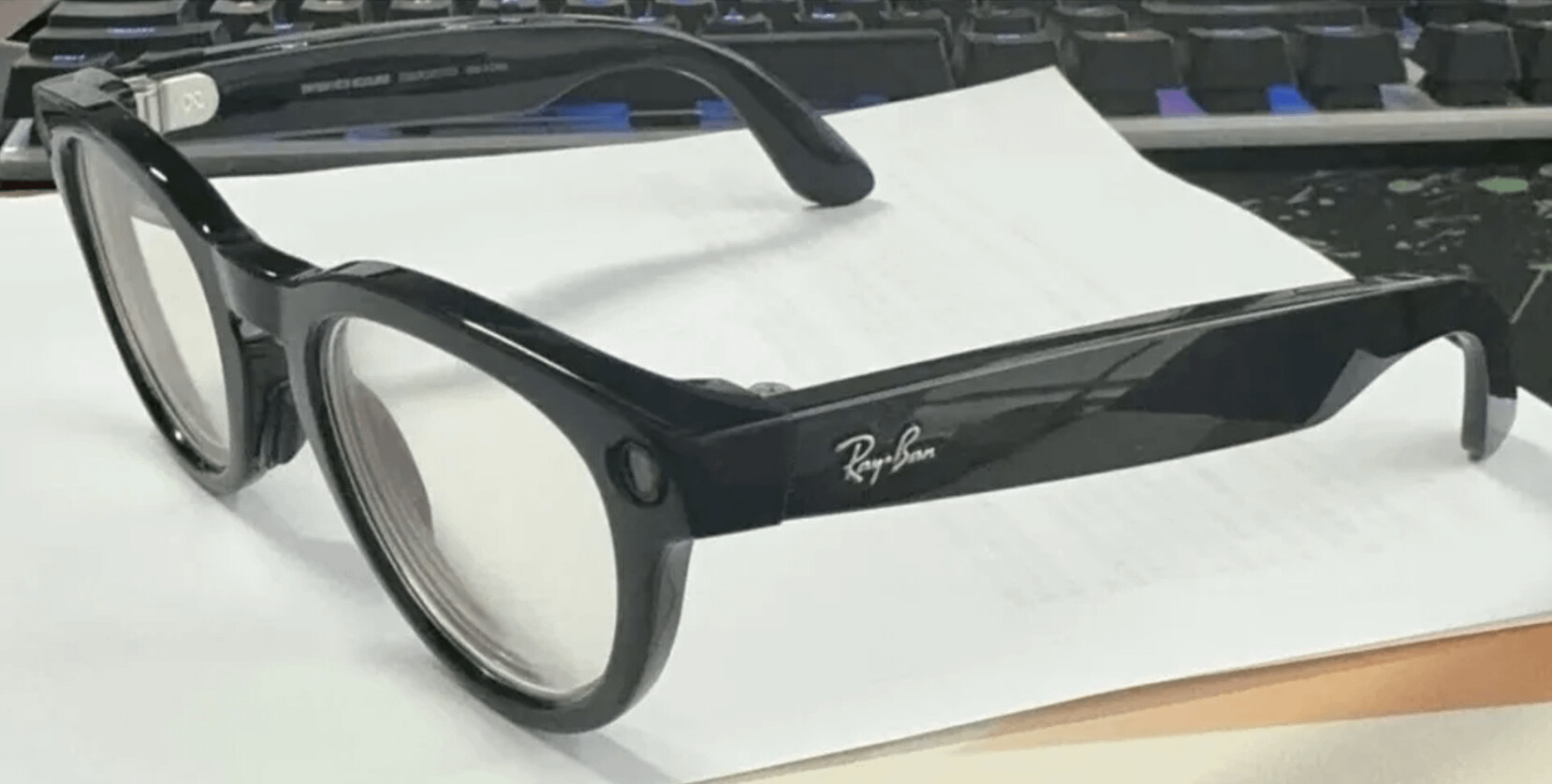

In the past year, the news that Meta’s Ray-Ban glasses sold over one million units has excited many companies. The so-called “AI glasses,” seen as a promising new product category, have been hyped up once again. On the streets of the U.S., it’s not uncommon to spot people wearing these glasses, which are equipped with dual cameras and audio functionality.

A large number of companies, including Baidu and Xiaomi, have rushed into this space, even attracting entrants from unexpected industries like power bank manufacturers. Rumor has it that Apple and Samsung are also eager to join the race. This sudden surge of enthusiasm reminds me of the smart speaker craze from years ago. Back then, over a hundred companies in Shenzhen were making smart speakers — but as we all know, most of them eventually stopped.

Ray-Ban AI Glasses

At its core, what we call “AI glasses” today are essentially glasses equipped with audio and camera capabilities. Bose was among the first to introduce audio-enabled glasses with its so-called BoseAR, which was essentially a pair of headphones in the form of sunglasses. Around the same time, Snap released its first-generation Spectacles, which allowed users to record short videos. I bought both out of curiosity at the time—but predictably, they’ve long since disappeared into a corner, gathering dust.

Clearly, the concept of adding sensors to eyewear isn’t new. So why do “AI glasses” suddenly seem fresh again? The answer is simple: large language models (LLMs) have entered the picture. The current buzz revolves around the idea of using LLMs on smartphones (usually via an app, like ByteDance’s Doubao) to “empower” these devices. You might think it’s just cameras and speakers, but no, this is AI-powered smart hardware!

OK, now that we’ve laid the groundwork, let’s get to the conclusion: In my opinion, today’s so-called “AI glasses” will inevitably transition (in the short term) to AR glasses. That means evolving from “audio + cameras” to “audio + cameras + near-eye displays.”

The Moment You’re Forced to Pull Out Your Phone, AI Glasses Lose the Game

This isn’t a criticism stemming from years of working in XR, nor is it a forced negative view of AI glasses. The issue lies in product logic: AI glasses without a display are fundamentally awkward and lack coherence. (For an analysis of why Ray-Ban glasses sell well, see the end of this article.)

For any product, the three most critical aspects are scenarios, scenarios, and scenarios.

AI Glasses with on-device AI processing

Let’s examine the scenarios for “AI glasses.” Take Baidu’s Xiaodu AI Glasses as an example. According to reports, they offer:

- First-person video recording,

- On-the-go Q&A,

- Calorie recognition,

- Object identification,

- Visual translation,

- Intelligent reminders.

When summarized, these features boil down to two core functionalities:

- Recognition + audio prompts for information (on-the-go Q&A, calorie recognition, object identification, visual translation, intelligent reminders).

- First-person video recording.

Let’s step back for a moment. How do we typically interact with AI today? Most of the time, it’s through a smartphone. The truth is, all the functions mentioned above can already be fully achieved with a smartphone screen and camera. AI glasses merely relocate the phone’s audio and camera capabilities to your head. Their biggest advantage is that you don’t need to take your phone out, which can be convenient in certain scenarios—such as when your hands are occupied (e.g., cycling or driving) and you need navigation or recording.

Now let’s consider the typical interaction flow between a user and AI on a phone. For example, when you want to know something, you ask the AI, and it responds with a long block of text, like this:

This is from Doubao; the Q&A itself is unrelated to this article, and only half the response is shown.

As you can see, the response is full of text. Most of the time, we don’t have the patience to listen to the AI read the entire thing aloud. That’s because the brain processes text or visual information far more efficiently than audio. Often, we just skim through the text, grasp the key points, and immediately move on to the next question.

Now, if we translate this scenario to AI glasses, problems arise. Imagine you’re walking down the street wearing AI glasses. You ask a question, and the AI responds with a long-winded explanation. You may not remember or even care to listen to the entire response. By the time the AI finishes speaking, your attention or location may have shifted. Frustrated, you’ll end up pulling out your phone to read the full text instead.

Moreover, there’s the issue of interaction itself: audio is inherently a “laggy” form of interaction. Anyone familiar with real-time interpretation, smart speakers, or in-car voice assistants will know this. You have to finish an entire sentence for the AI to process it and respond. The response might often be incorrect or irrelevant—like answering a completely different question.

(For more on this issue, see my earlier article: “The Media and Big Thinkers Are Hyping a New AI+AR ‘Unicorn,’ But I Think It’s Better Suited for Street Fortune-Telling.”)

This means there’s a high likelihood that:

- You spend a long time talking to the AI, and it doesn’t understand you.

- You find the response too slow, so you pull out your phone to type the command yourself.

- You feel the AI is rambling, so you take out your phone to skim the full text.

- Privacy concerns arise—you wouldn’t want to use voice commands to ask the AI to send a flirty message to your girlfriend in a public place.

In the end, the moment you’re forced to pull out your phone, the significance of AI glasses drops to almost zero.

After Audio, Let’s Talk About Cameras

A person holding a phone up in the air to take a picture

Admittedly, having a camera on your head provides a more elegant option for taking photos. Personally, I’m not a fan of taking pictures, for two main reasons: first, pulling out a phone to take a picture feels awkward and inelegant to me; second, it often seems disrespectful to the person speaking to you (for example, even if you’re using your phone to record what they’re saying, it can still come across as rude).

But I wonder how many people who use glasses for photography are genuinely taking photos in their daily lives. When photographing people, objects, or scenery, you typically need to rely on the framing guidelines provided by a phone’s viewfinder. Often, you might need to crouch or adjust the angle to capture the perfect shot—something that AI glasses in their current form are almost incapable of doing. And let’s not forget that the camera quality of AI glasses is inevitably far inferior to that of a smartphone.

Of course, many might argue that these glasses are mainly designed for first-person video recording or quick snapshots. To that, I can only say: if you have absolutely no expectations for the quality of your footage and just want to casually capture something, then yes, AI glasses could be somewhat useful. However, the discomfort of “not being able to see what you’re recording while you’re recording it” is likely to bother most people. And in the vast majority of cases, these functions can be completely replaced by a smartphone.

It all comes back to the same point: the moment you’re forced to pull out your phone, the significance of AI glasses drops to almost zero.

AI + AR Will Streamline the Entire Product Logic

Why do I say that AI glasses will inevitably transition to AR glasses in the near future?

To make it easier to understand, let’s stop calling them “AR glasses” for now. Instead, think of them as “Siri with near-eye displays for text and images” (I’ll call this “Piri”). This term captures the core concept better.

Let’s go back to Baidu’s AI glasses as an example. Look closely at the images in their own promotional materials: anyone unfamiliar with the product might think these are advertisements for AR glasses. (They even include thoughtfully designed AR-style UI elements.)

Frames from Baidu’s promo video for the Xiaodu AI Glasses

From these images alone, it’s clear that once near-eye display functionality allows AI-provided information to be presented directly—even if it’s just monochrome text—the entire product logic suddenly makes sense.

Let’s revisit the scenarios we discussed earlier:

- Recognition + audio prompts for information: With near-eye displays, text information can now appear directly in the user’s view, making it instantly readable. What used to take minutes to listen to can now be grasped in seconds. Additionally, AI could automatically generate memos that float in your field of view, ready to be checked at any time (ideally disappearing after a short period).

Translation functionality also becomes more convenient for the wearer. While it’s not perfect (you can’t guarantee the other person is also wearing similar glasses), the vision of widespread AR adoption is precisely what the industry is striving for, right?

- Photography: A simple viewfinder on the side could let users see what they’re capturing. This provides guidance and resolves the issue of blindly taking photos or videos.

This type of product doesn’t have to stick to the traditional shape of ordinary glasses. Monochrome waveguides could easily handle the basic functionality of Baidu’s AI glasses. Moreover, combining them with traditional optical systems (such as BB/BM/BP geometrical optics) could open up entirely new scenarios—like virtual companions (imagine a virtual Xiao Zhan accompanying you to watch a movie) or interactive training (a virtual tutor practicing a foreign language with you face-to-face). These are scenarios that display-limited waveguides struggle to achieve effectively.

AI Powers AR But Can’t Solve All Optical Challenges

While AI’s capabilities enhance the potential of AR glasses, they can’t unify the variety of optical solutions in AR glasses. For instance, AI cannot improve the display quality of certain optical designs, like waveguides. However, it can add more functionality to existing AR products:

- For waveguide-based glasses, AI could resolve the lack of compelling use cases, turning them into more practical tools.

- For BB-style large-screen AR glasses, AI might not only enrich their features but also address their current dilemma: difficulty justifying a high price tag (it’s almost like selling at a loss just to gain attention).

Additionally, this combination might spur the development of entirely new optical systems, potentially leading to innovative product categories.

Here’s an old concept model from 2018 (apologies for the rough design).

From this image, you can see how this type of product fundamentally differs from today’s large-screen AR glasses. The latter, positioned as “portable large screens,” are more akin to plug-and-play ‘glasses-shaped monitors.’ In contrast, AI + AR glasses would emphasize the practicality and usability of the app ecosystem. These two types of devices have completely different design and development philosophies.

This is also why current waveguide + microLED glasses haven’t gained widespread acceptance. Most of them are simply following the design philosophy of large-screen glasses, stacking hardware to achieve near-eye displays without thoroughly refining the app ecosystem. Some even fail to deliver decent hardware performance.

The Path Forward: AI Glasses Transitioning to AI + AR Glasses

Looking ahead, we can predict that companies making AI glasses today will face mixed market feedback:

- Those that entered the space blindly, without understanding the product’s core value, will likely abandon it altogether.

- Companies serious about developing a viable product will eventually incorporate display functionality, transitioning to AI + AR glasses.

Blindly following trends is meaningless and often leads to dead ends. But for those willing to innovate, AI + AR is the natural evolution of AI glasses.

Blindly Following Trends Is Pointless and Often Leads to Pitfalls

That brings us back to the question: Why have Ray-Ban’s smart glasses sold so well?

Ray-Ban AI Glasses

In my opinion, the success of Ray-Ban’s smart glasses lies in a pragmatic commercial strategy. Let’s break it down:

- The strong brand appeal of Ray-Ban: Ray-Ban is a well-established mid-to-high-end eyewear brand with strong recognition in the consumer market, especially in the United States.

- Extensive offline retail channels: AI glasses are hardware products and a new category, which makes them hard to sell online alone. Ray-Ban’s robust offline retail network allows users to try the glasses in-store, significantly increasing the likelihood of a purchase.

- Reasonable pricing: The price of Meta’s smart glasses is comparable to that of regular Ray-Ban sunglasses. For consumers who were already planning to spend this much on sunglasses, adding a few trendy features makes it an easy upgrade.

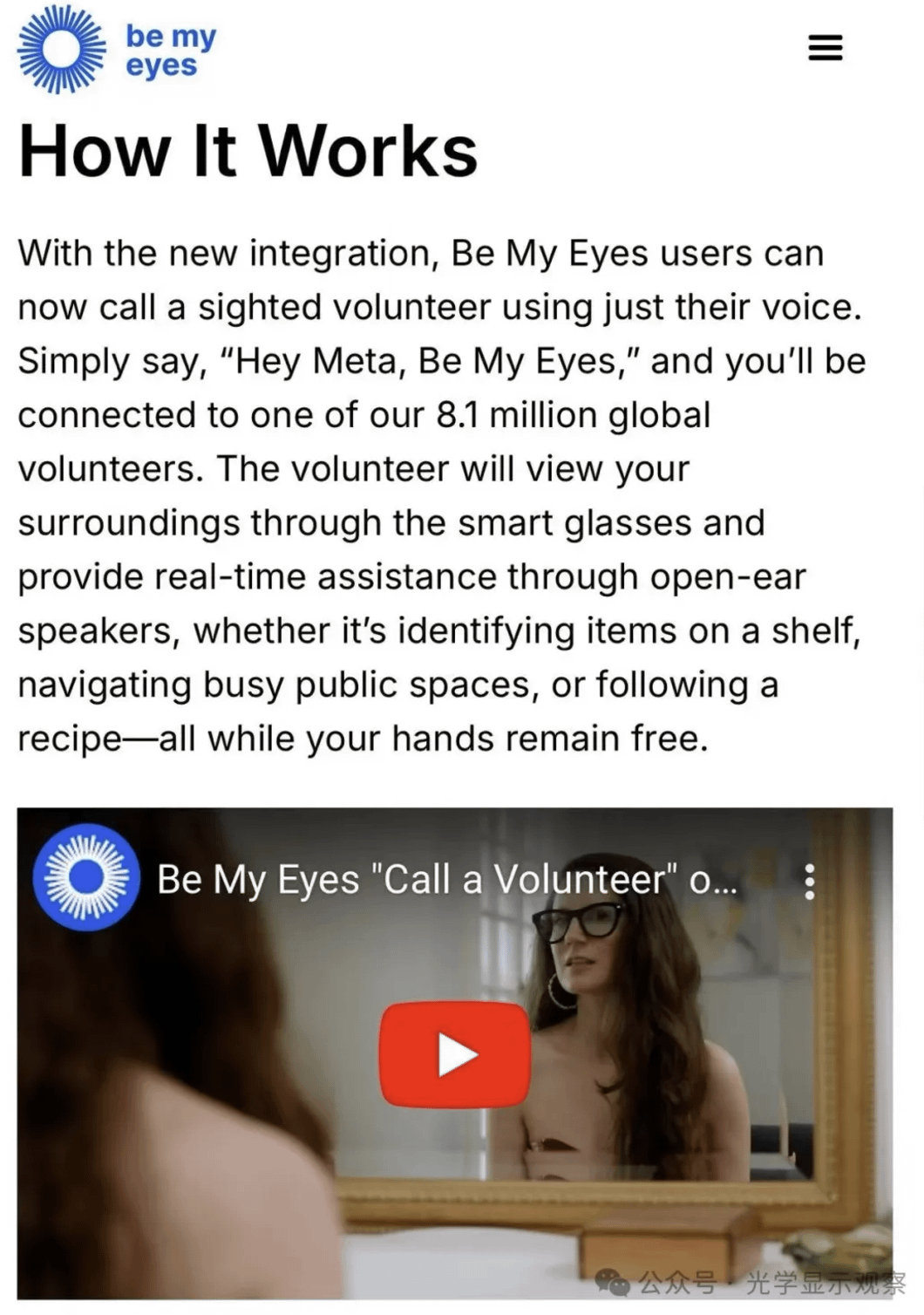

- Practical applications for certain users: Some users genuinely benefit from first-person video recording, such as livestreamers who wear the glasses for hands-free filming or visually impaired individuals who use apps like Be My Eyes.

- Most importantly: Even if users stop using the AI or camera features after a month or two, the glasses remain a stylish and functional product. They are something you can confidently wear out in public—or even enjoy wearing daily—purely as eyewear.

In summary, the success of Meta’s Ray-Ban smart glasses has little, if anything, to do with AI or AR. It may not even have much to do with their functionality. Instead, it’s a combination of brand strength and a well-thought-out product positioning. It’s also worth noting that Meta only achieved this after two product iterations; the first-generation Ray-Ban Stories had lackluster sales.

Be My Eyes app, now available on Ray-Ban glasses

For example, the Be My Eyes app, now available on Ray-Ban glasses, allows visually impaired individuals to connect with a network of 8.1 million volunteers. These volunteers can use the glasses’ camera feed to view the wearer’s surroundings and provide instructions via audio.

Lessons for AI Glasses in the Chinese Market

It’s clear that some Chinese companies are trying to replicate this model by partnering with eyewear brands like Boshi or Bolon. However, this approach may not be enough, because the Chinese consumer market is vastly different from the U.S. market. How many people in China are willing to spend over a thousand yuan on a pair of sunglasses? Not many. Personally, I wouldn’t.

If companies want to make the AI features compelling enough for consumers to buy, the next logical step is to transition to AI + AR.

_____

Meta’s Next Steps: Toward AI + AR Glasses

In my article “Meta AR Glasses Optics Breakdown: Where Did $10,000 Go?”, I mentioned rumors that Meta plans to release glasses with waveguide technology in 2025. The optical design is said to use 2D reflective (array) waveguides paired with LCoS projectors.

While this optical design is likely a transitional step, the evolution from “audio + cameras” to “audio + cameras + near-eye displays” is a sound and logical progression for AI glasses.

A Final Note: The Risks of Blindly Following Trends

The consequences of blindly copying others are often dire. Take Apple’s Vision Pro as an example. When it was first released last year, I predicted it would fail (see my article “Vision Pro Is Not a Savior but Apple’s Cry for Help”).

The core question that every product must answer remains the same: What are people going to use this for?

Vision Pro’s biggest issue isn’t its hardware; it’s the severe lack of content. VR has always been heavily reliant on PC/console gaming ecosystems. Even with Vision Pro’s impressive hardware specifications, it’s essentially useless without content. For the companies that are still copying Vision Pro (I know of several), what’s the point if you don’t have a robust content ecosystem?